Multimodal Video Understanding: The Distance from Possible to Reliable

When we tried to make AI “understand” an ad video, we found the problem goes far beyond calling an API.

Why Use AI to Analyze Video Creatives?

There’s a long-standing problem in advertising: the causal relationship between creative and performance is essentially a black box.

We know how much an ad cost and how many conversions it drove, but it’s hard to answer why one ad outperformed another. Was it the hook in the first two seconds? The talent’s delivery? The CTA wording? Traditional approaches rely on manual tagging — expensive, subjective, and impossible to scale.

Multimodal large models have, for the first time, made it possible to automatically and structurally understand video creatives.

But “possible” and “reliable” are very different things.

The Capability Boundary

Current multimodal models can accept video files directly, understand temporal narrative, reason across modalities (visual + text + audio), and even output structured JSON against a schema. Two years ago, none of this was feasible.

But in production, we encountered a few fundamental tensions:

Precision vs. semantics. Models are good at semantics. They can tell you “there’s a product close-up in the middle of the video,” but they struggle to pinpoint exact time ranges. Their sense of time is narrative, not frame-accurate. A single model cannot be relied on to do both segmentation and understanding.
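Frame-accurate boundaries are the kind of output a classical algorithm produces deterministically. The sketch below shows histogram-difference shot-cut detection. It assumes frames are already decoded into non-empty flat lists of 0 to 255 grayscale values. The function names, the 16-bin histogram, and the 0.5 threshold are illustrative assumptions, not the pipeline described in this article. A production system would more likely use a dedicated shot-detection library.

```python
from typing import List, Sequence, Tuple


def histogram(frame: Sequence[int], bins: int = 16) -> List[float]:
    """Normalized grayscale histogram of one frame (pixel values 0-255)."""
    counts = [0] * bins
    for value in frame:
        counts[min(value * bins // 256, bins - 1)] += 1
    return [c / len(frame) for c in counts]


def detect_cuts(frames: Sequence[Sequence[int]], fps: float,
                threshold: float = 0.5) -> List[float]:
    """Timestamps (seconds) where consecutive frames differ more than threshold."""
    if not frames:
        return []
    cuts: List[float] = []
    prev = histogram(frames[0])
    for i in range(1, len(frames)):
        cur = histogram(frames[i])
        # Half the L1 distance lies in [0, 1]: 0 = identical, 1 = disjoint histograms.
        distance = 0.5 * sum(abs(a - b) for a, b in zip(prev, cur))
        if distance > threshold:
            cuts.append(i / fps)
        prev = cur
    return cuts


def to_segments(cuts: List[float], duration: float) -> List[Tuple[float, float]]:
    """Turn cut points into (start, end) ranges covering the whole video."""
    bounds = [0.0] + cuts + [duration]
    return list(zip(bounds[:-1], bounds[1:]))
```

The resulting `(start, end)` ranges are fixed inputs for the model, which then only has to describe each range, not find it.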

Global vs. detail. Feed a model a 30-second video alongside dozens of analysis fields, and it will prioritize the big picture while losing granular details. Ask for too much at once, and the model’s attention spreads thin.

Probabilistic output vs. deterministic needs. Large models are probabilistic by nature. Even with temperature at zero, two runs on the same video may produce different values for certain fields. The model doesn’t truly “understand” your schema constraints — it makes probabilistic best guesses. It will confidently give wrong answers, and won’t necessarily say “I don’t know” when it should.
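One common way to surface this run-to-run variance is to run the model several times on the same video and vote per field. This is an assumption for illustration, not necessarily what the authors do, and it multiplies inference cost. The sketch keeps a field only when enough runs agree and otherwise marks it unknown. `consensus` and `min_agreement` are hypothetical names, and field values are assumed to be hashable scalars.

```python
from collections import Counter
from typing import Any, Dict, List, Tuple


def consensus(runs: List[Dict[str, Any]],
              min_agreement: float = 0.6) -> Tuple[Dict[str, Any], List[str]]:
    """Majority-vote each field across repeated runs of the same input.

    Returns the voted result plus the fields whose agreement fell below
    min_agreement. Those fields are set to None rather than guessed.
    """
    result: Dict[str, Any] = {}
    unstable: List[str] = []
    fields = sorted({key for run in runs for key in run})
    for name in fields:
        # A field missing from a run counts as a vote for None.
        values = [run.get(name) for run in runs]
        value, count = Counter(values).most_common(1)[0]
        if count / len(runs) >= min_agreement:
            result[name] = value
        else:
            result[name] = None  # no stable answer: treat as "unknown"
            unstable.append(name)
    return result, unstable
```

The `unstable` list is useful beyond the single video. Fields that are unstable across many videos usually point at an ambiguous definition in the schema or prompt.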

These aren’t bugs. They’re inherent characteristics of this technology paradigm. Better models will narrow the gap, but these tensions won’t fully disappear.

Engineering Matters More Than Model Selection

Once you accept these boundaries, the real question becomes: how do you build a system that needs determinism and consistency on top of a capability that is probabilistic and fallible?

We arrived at a few design principles:

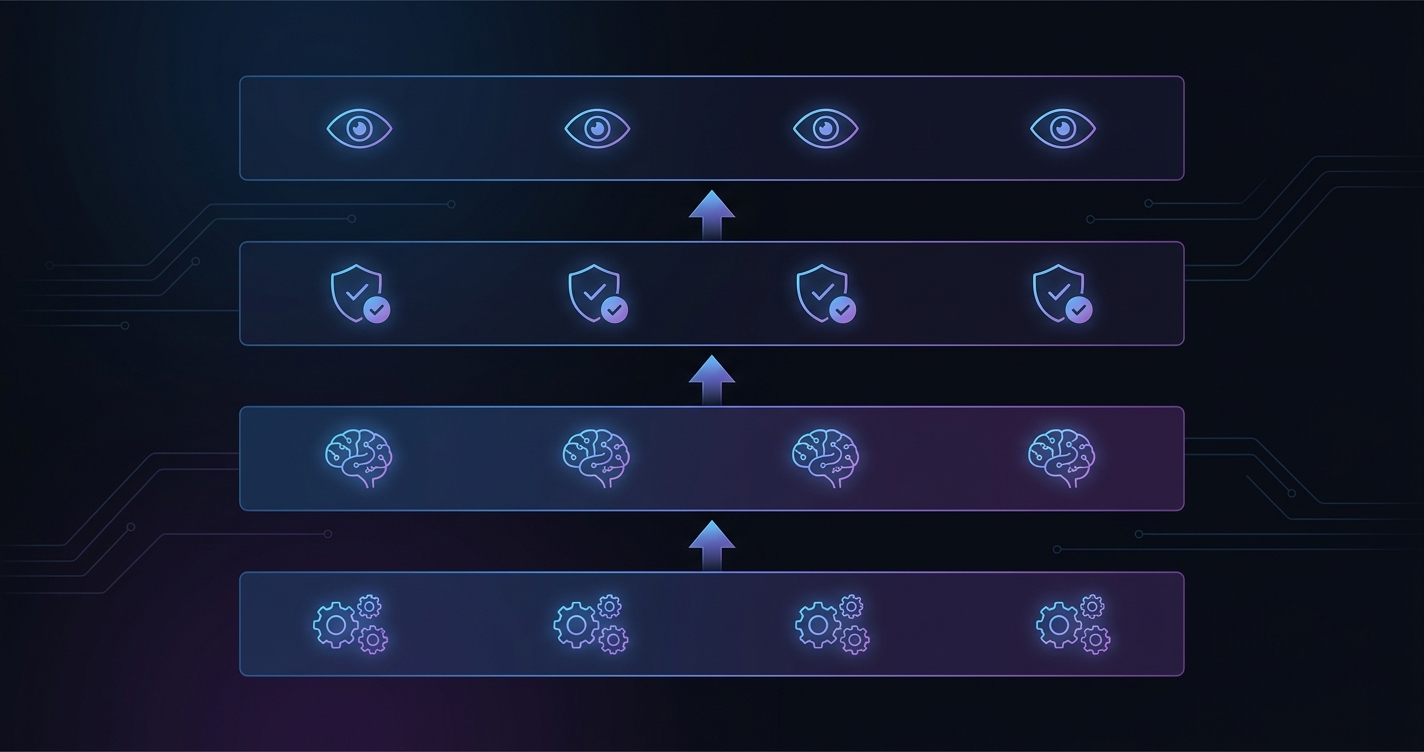

Let each layer do what it’s best at. Traditional algorithms handle precise segmentation. The LLM handles semantic understanding. A rule engine handles constraint validation. Humans handle semantic definitions and quality standards. Each layer works within its competence, composed through clean interfaces. This is far more practical than expecting one model to solve everything end-to-end.
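The sketch below shows the layering as plain function composition. It assumes a deterministic segmenter, an LLM annotator, and a list of rule checks, with anything that fails a rule routed to a human review queue. All names (`Segment`, `run_pipeline`, `shot_type_rule`, the allowed shot types) are hypothetical. The segmenter and annotator are stand-ins for real implementations.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Segment:
    start: float  # seconds, from the deterministic segmenter
    end: float
    labels: Dict[str, str] = field(default_factory=dict)  # from the LLM layer
    issues: List[str] = field(default_factory=list)       # from the rule layer


# Each layer is just a callable with a narrow interface.
Segmenter = Callable[[str], List[Tuple[float, float]]]
Annotator = Callable[[str, float, float], Dict[str, str]]
Rule = Callable[[Segment], List[str]]

ALLOWED_SHOT_TYPES = {"close_up", "wide", "talking_head"}  # hypothetical vocabulary


def shot_type_rule(seg: Segment) -> List[str]:
    """Rule layer: reject labels outside the agreed vocabulary."""
    value = seg.labels.get("shot_type")
    return [] if value in ALLOWED_SHOT_TYPES else [f"shot_type={value!r} not allowed"]


def run_pipeline(video: str, segment: Segmenter, annotate: Annotator,
                 rules: List[Rule]) -> Tuple[List[Segment], List[Segment]]:
    """Run all layers; return (accepted, needs_human_review)."""
    accepted: List[Segment] = []
    review: List[Segment] = []
    for start, end in segment(video):
        seg = Segment(start, end)
        seg.labels = annotate(video, start, end)
        for rule in rules:
            seg.issues.extend(rule(seg))
        (review if seg.issues else accepted).append(seg)
    return accepted, review
```

Because each layer only sees its own interface, any one of them (say, a better segmenter or a different model) can be swapped without touching the others.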

Divide and conquer over all-at-once. Rather than feeding everything to the model in a single pass, go global first, then local — one pass for the overall judgment and planning, another for per-segment detail extraction. Each pass has its own scope and constraints. This significantly improves detail accuracy.
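The sketch below shows a two-pass flow. It assumes a hypothetical `call_model` function that takes a prompt string and returns JSON text. The field lists and prompt wording are illustrative only. Pass one asks for a small set of whole-video fields. Pass two makes one call per segment with a narrow field set and passes along the global result as context.

```python
import json
from typing import Any, Callable, Dict, List, Tuple

ModelFn = Callable[[str], str]  # hypothetical: prompt in, JSON text out

GLOBAL_FIELDS = ["overall_style", "narrative_arc"]           # illustrative
SEGMENT_FIELDS = ["shot_type", "on_screen_text", "speaker"]  # illustrative


def analyze(video_id: str, segments: List[Tuple[float, float]],
            call_model: ModelFn) -> Dict[str, Any]:
    # Pass 1: global judgment over the whole video, small field set.
    global_prompt = (f"Video {video_id}. Return JSON with keys {GLOBAL_FIELDS} "
                     "describing the video as a whole.")
    overview = json.loads(call_model(global_prompt))

    # Pass 2: one scoped call per segment, carrying the global result as context.
    details = []
    for start, end in segments:
        prompt = (f"Video {video_id}, segment {start:.2f}s-{end:.2f}s. "
                  f"Context: {json.dumps(overview)}. "
                  f"Return JSON with keys {SEGMENT_FIELDS} for this segment only.")
        details.append({"start": start, "end": end, **json.loads(call_model(prompt))})
    return {"overview": overview, "segments": details}
```

The cost is 1 + N calls instead of one. Each call has a narrower scope, which is where the article locates the accuracy gain.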

Schema is a product contract, not a config file. If your analysis results feed downstream consumers (modeling, reporting, decision-making), schema stability and consistency are the real competitive advantage. Every schema change must be accompanied by updated prompt guidance and validation rules — these three are a single unit. Change only one, and the system becomes inconsistent.
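One way to keep schema, prompt guidance, and validation rules from drifting apart is to generate the latter two from a single definition. This is an assumed design, sketched below, not necessarily the authors'. The field names, allowed values, and `SCHEMA_VERSION` are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple

SCHEMA_VERSION = "2"  # hypothetical; bump whenever any FieldSpec changes


@dataclass(frozen=True)
class FieldSpec:
    name: str
    allowed: Tuple[str, ...]  # drives the validation rule
    guidance: str             # drives the prompt text


SCHEMA = (
    FieldSpec("hook_type", ("question", "demo", "testimonial", "none"),
              "The device used in the first ~2 seconds to hold attention."),
    FieldSpec("cta_style", ("direct", "soft", "none"),
              "'direct' names an action (e.g. 'Buy now'); 'soft' only suggests one."),
)


def render_guidance(schema: Tuple[FieldSpec, ...] = SCHEMA) -> str:
    """Prompt section generated from the schema, so the two cannot drift."""
    lines = [f"Schema v{SCHEMA_VERSION}. Fill every field with one allowed value."]
    for spec in schema:
        lines.append(f"- {spec.name}: one of {list(spec.allowed)}. {spec.guidance}")
    return "\n".join(lines)


def validate(output: Dict[str, Any], schema: Tuple[FieldSpec, ...] = SCHEMA) -> List[str]:
    """Validation rules generated from the same schema."""
    errors = []
    for spec in schema:
        value = output.get(spec.name)
        if value not in spec.allowed:
            errors.append(f"{spec.name}: {value!r} not in {spec.allowed}")
    known = {spec.name for spec in schema}
    errors.extend(f"unexpected field: {name}" for name in sorted(set(output) - known))
    return errors
```

With this shape, a schema change and its prompt and validation changes land as one commit by construction.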

Prompt is code, not comments. In our system, the prompt is longer than many core modules. It’s not “a note for the model” — it’s part of the system’s behavioral specification, defining how the model should reason, distinguish similar concepts, and handle uncertainty. Treat prompts as throwaway configuration and output quality degrades fast. Prompts deserve version control, code review, and first-class citizenship.
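The sketch below shows one possible way to treat prompts as versioned source: an assumption for illustration, not the authors' tooling. The template lives in the repository, rendering fails loudly on a missing parameter, and every output is tagged with a content hash of the prompt that produced it. `PromptVersion`, `SEGMENT_PROMPT`, and `tag_result` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass
from string import Template
from typing import Any, Dict


@dataclass(frozen=True)
class PromptVersion:
    name: str
    template: str  # checked into the repo and reviewed like any other source file

    @property
    def digest(self) -> str:
        """Content hash: any edit to the template yields a new identifier."""
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]

    def render(self, **params: str) -> str:
        # substitute() raises KeyError on a missing parameter, instead of
        # silently sending a half-filled prompt to the model.
        return Template(self.template).substitute(**params)


SEGMENT_PROMPT = PromptVersion(
    name="segment_detail",
    template=("Describe segment $start-$end of the ad. "
              "If on-screen text could be either a disclaimer or a testimonial, "
              "answer 'uncertain' instead of guessing."),
)


def tag_result(result: Dict[str, Any], prompt: PromptVersion) -> Dict[str, Any]:
    """Attach prompt identity so a quality regression can be traced to a prompt edit."""
    return {**result, "prompt": prompt.name, "prompt_digest": prompt.digest}
```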

What “Understanding” Actually Means

When we say a model “understood” a video, what do we actually mean?

A model can identify “a person speaking to camera,” but may not reliably judge whether the style reads as UGC or brand content. It can see text on screen, but “is this a disclaimer or a user testimonial” is a judgment call it sometimes gets wrong.

What multimodal models currently provide is medium-granularity semantic understanding — deeper than keyword matching, shallower than a human expert. This distinction matters because it shapes how you should design the system: don’t expect the model to do everything. Place it within a framework that has constraints, validation, and fallbacks.
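A fallback can be as simple as the sketch below. It validates each answer, retries a bounded number of times, and otherwise marks the item for human review rather than passing an invalid answer downstream. `annotate_with_fallback`, the `status` values, and the two-attempt default are hypothetical choices.

```python
from typing import Any, Callable, Dict, List


def annotate_with_fallback(
    call_model: Callable[[], Dict[str, Any]],          # hypothetical model call
    validate: Callable[[Dict[str, Any]], List[str]],    # returns a list of problems
    max_attempts: int = 2,
) -> Dict[str, Any]:
    """Validate, retry a bounded number of times, then fall back to human review."""
    errors: List[str] = []
    for attempt in range(1, max_attempts + 1):
        output = call_model()
        errors = validate(output)
        if not errors:
            return {"status": "ok", "attempts": attempt, "output": output}
    # Never hand an invalid answer downstream as if it were valid.
    return {"status": "needs_review", "attempts": max_attempts, "errors": errors}
```

Retrying only helps with sampling noise. A field that keeps failing validation usually needs a clearer definition, not more attempts.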

You need to rethink what “correctness” means — strict constraints on critical fields, tolerance for reasonable variation on ambiguous ones, post-hoc validation rather than upfront assumptions. This isn’t a compromise. It’s respecting the nature of the problem.
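The sketch below encodes “strict on critical fields, tolerant on ambiguous ones” as a per-field comparison policy. It compares a model output against a reference, which could be a human label or a second run. The field names, the choice of exact match versus Jaccard overlap, and the 0.5 threshold are all illustrative assumptions.

```python
from typing import Any, Callable, Dict, Iterable, Optional


def exact(a: Any, b: Any) -> bool:
    return a == b


def jaccard_at_least(threshold: float) -> Callable[[Optional[Iterable[str]], Optional[Iterable[str]]], bool]:
    """Tolerant check for tag-like fields: pass if set overlap is high enough."""
    def check(a: Optional[Iterable[str]], b: Optional[Iterable[str]]) -> bool:
        sa, sb = set(a or ()), set(b or ())  # a missing field counts as no tags
        if not sa and not sb:
            return True
        return len(sa & sb) / len(sa | sb) >= threshold
    return check


# Hypothetical policy: critical fields must match exactly; ambiguous ones may vary.
POLICY: Dict[str, Callable[[Any, Any], bool]] = {
    "has_disclaimer": exact,             # critical
    "cta_text_present": exact,           # critical
    "mood_tags": jaccard_at_least(0.5),  # ambiguous: reasonable annotators differ
}


def compare(pred: Dict[str, Any], ref: Dict[str, Any],
            policy: Dict[str, Callable[[Any, Any], bool]] = POLICY) -> Dict[str, bool]:
    """Per-field pass/fail of a model output against a reference."""
    return {name: check(pred.get(name), ref.get(name)) for name, check in policy.items()}
```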

Closing Thoughts

Multimodal models have made many previously impossible things possible. But between “possible” and “reliable” there is still a long road.

On this road, the value of engineering design doesn’t diminish as models get stronger; quite the opposite. The more powerful the model, the more critical the engineering architecture around it becomes, because you’re embedding a probabilistic, fallible, not fully controllable capability into a business system that needs determinism, consistency, and traceability.

That might be one of the most interesting challenges in AI engineering right now.